03: Who made it

Who's behind ChatGPT's development?

OpenAI was founded in San Francisco in 2015 by Sam Altman, Reid Hoffman, Jessica Livingston, Elon Musk, Ilya Sutskever, Peter Thiel and others, who collectively pledged US$1 billion. Musk resigned from the board in 2018 but remained a donor. Microsoft provided OpenAI LP with a $1 billion investment in 2019 and a second multi-year investment in January 2023, reported to be $10 billion.

What is OpenAI's goal?

Some scientists, such as Stephen Hawking and Stuart Russell, have voiced concerns that if advanced AI someday gains the ability to redesign itself at an ever-increasing rate, an unstoppable "intelligence explosion" could lead to human extinction. Co-founder Musk has characterized AI as humanity's "biggest existential threat." Seeking to mitigate the inherent dangers of artificial intelligence, OpenAI's founders structured it as a non-profit so that they could focus its research on making positive long-term contributions to humanity.

Growth, Potential and Controversies

Musk posed the question: "What is the best thing we can do to ensure the future is good? We could sit on the sidelines or we can encourage regulatory oversight, or we could participate with the right structure with people who care deeply about developing AI in a way that is safe and is beneficial to humanity." Musk acknowledged that "there is always some risk that in actually trying to advance (friendly) AI we may create the thing we are concerned about"; nonetheless, in his view the best defense is "to empower as many people as possible to have AI. If everyone has AI powers, then there's not any one person or a small set of individuals who can have AI superpower."

Musk and Altman's counter-intuitive strategy of reducing the risk that AI will cause overall harm by giving AI to everyone is controversial among those concerned with existential risk from artificial intelligence. Philosopher Nick Bostrom is skeptical of Musk's approach: "If you have a button that could do bad things to the world, you don't want to give it to everyone."

During a 2016 conversation about the technological singularity, Altman said that "we don't plan to release all of our source code" and mentioned a plan to "allow wide swaths of the world to elect representatives to a new governance board". Greg Brockman stated that "Our goal right now... is to do the best thing there is to do. It's a little vague."

Microsoft is the biggest stakeholder in OpenAI

Sam Altman, co-founder of OpenAI, & Satya Nadella, Microsoft Chief Executive

Greg Brockman, co-founder and CTO; Ilya Sutskever, co-founder and chief scientist; and Dario Amodei, research director.

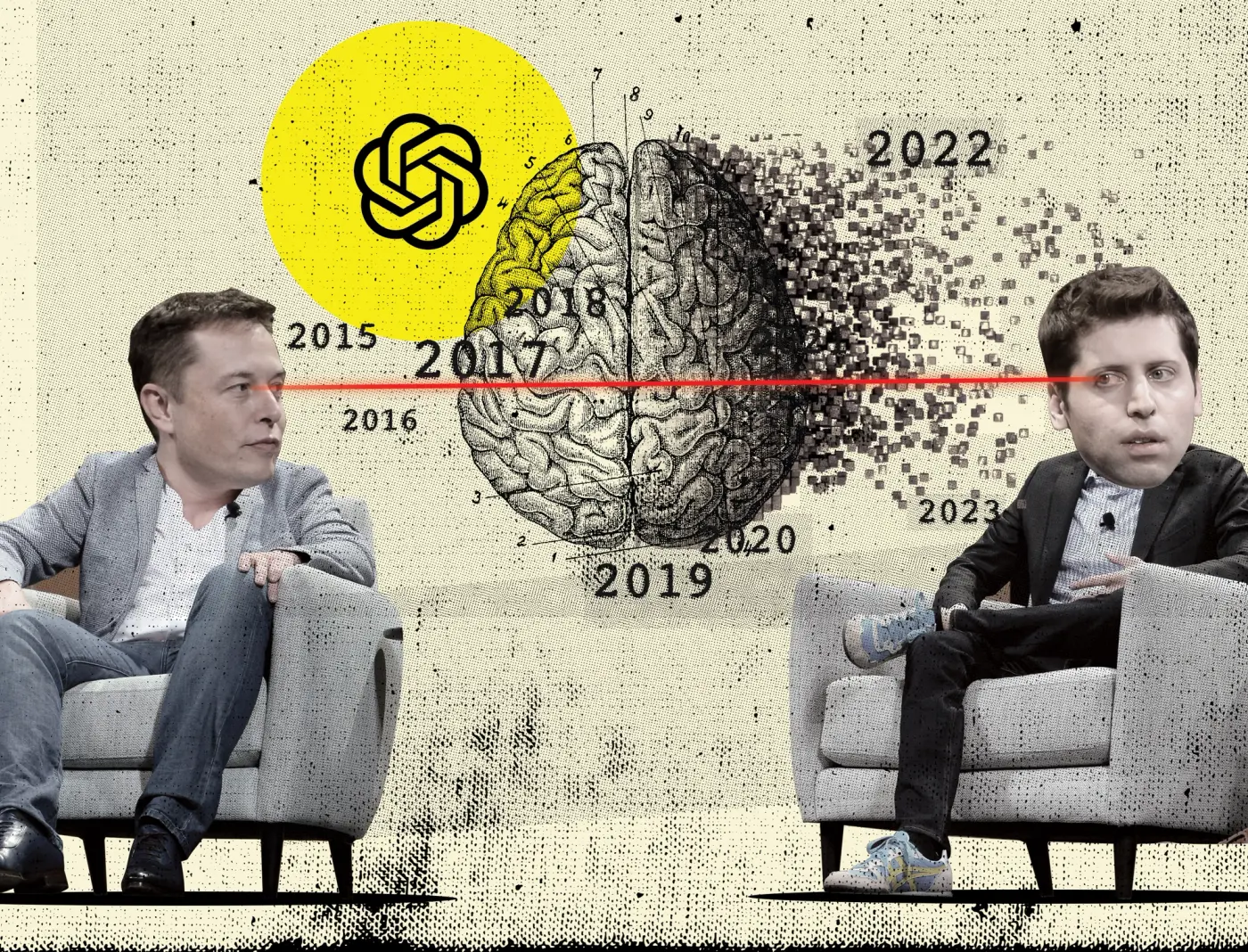

Musk & Altman, friends to rivals